If you are currently using version 5.x.x, we advise you to upgrade to the latest version before the EOL date. You can find the latest documentation here.

Introduction to Collection Agent

Overview

What is the Collection Agent?

The Collection Agent is a Geneos component that uses dynamic plugins to do the following:

- Discover what is available to monitor.

- Collect metrics, logs, and metadata about the monitored applications.

- Collect dimensional data.

The collected metrics are displayed in Geneos dataviews following the configured Dynamic Entities mappings. The collected logs can be monitored as streams by setting up an FKM sampler in the Netprobe. For more information on how to set up your Collection Agent, see Collection Agent setup.

Collection Agent plugins are separate from the Collection Agent binary. Therefore, Collection Agent plugins can be downloaded and upgraded independently of the Collection Agent itself. For more information on Collection Agent plugins, see Plugins.

Collection Agent is written in Java, so it is important to have the right version of Java installed on the host running it.

For more information on supported Java versions and platforms, see the 5.x Compatibility Matrix.

Why do we need the Collection Agent?

Collection Agent plugins have several advantages. A key one is that dimensions are attached to every datapoint. Dimensional data is metric, log, and event data that is self-describing, so setting up these plugins requires far less configuration in Geneos than setting up Netprobe plugins.

Examples of dimensions for a metric include the application's name, the host name, and its IP address. Geneos uses this information to show the metric in the right place in the Active Console State Tree.

Collection Agent data items are also strongly typed and have a unit of measure, which makes aggregation and analytics on the data much more efficient.
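As a rough illustration of what a self-describing, typed data point might look like, the following Python sketch models a metric with a value, a unit of measure, and a set of dimensions. The class and field names (`DataPoint`, `unit`, `dimensions`, `entity_key`) are hypothetical and do not represent the Collection Agent's actual data model.

```python
from dataclasses import dataclass, field
from typing import Dict, Tuple


@dataclass
class DataPoint:
    """Hypothetical self-describing metric (not the real Collection Agent model)."""

    name: str
    value: float
    unit: str  # unit of measure, for example "milliseconds"
    dimensions: Dict[str, str] = field(default_factory=dict)

    def entity_key(self) -> Tuple[Tuple[str, str], ...]:
        """Return the dimensions in sorted order, usable as a grouping key."""
        return tuple(sorted(self.dimensions.items()))


point = DataPoint(
    name="request_latency",
    value=12.5,
    unit="milliseconds",
    dimensions={"app": "orders", "hostname": "host-01", "ip": "10.0.0.5"},
)
```

Because the dimensions travel with the value, a consumer can group or place the data point without extra per-metric configuration.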

A Collection Agent and Netprobe can be used together to dynamically collect, identify, and visualise application metrics in a constantly changing environment.

Additionally, this solution makes some improvements over standard Netprobes:

- Allows applications to push metrics and logs. The Collection Agent's log processing plugins persist logs on disk until they are published to the Netprobe, so no data is lost if the Netprobe is unavailable.

- Uses a dimensional data model which retains more information about the data being sent.

Note: Beginning Geneos 5.1, the Collection Agent is included in the Netprobe binaries for and generic platforms.

Components

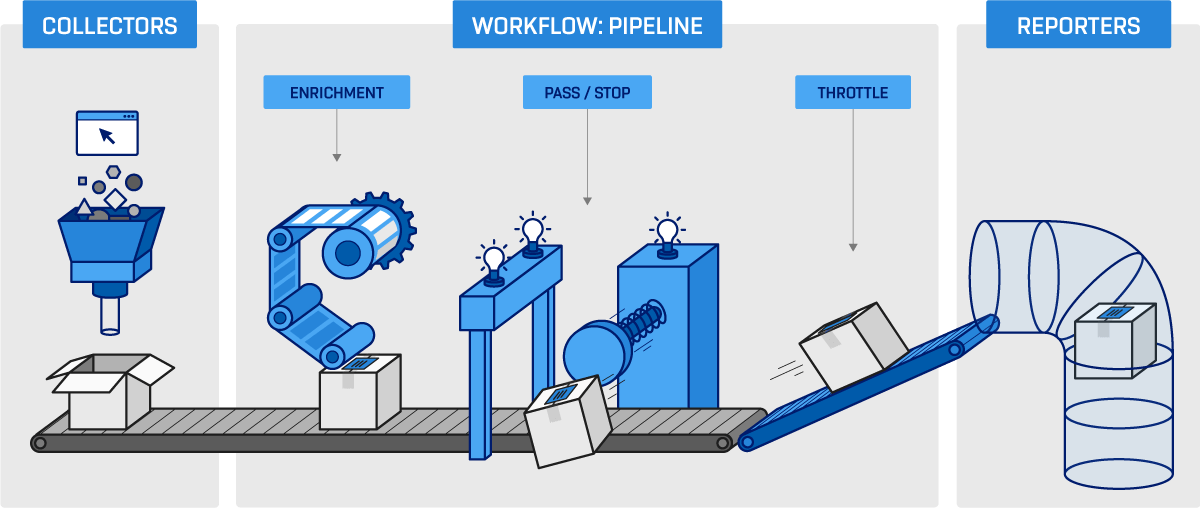

The Collection Agent gathers application data points and reports them to Geneos for visualisation. An instance is deployed to each host running monitored applications.

Collection Agent has the following sub-components:

- Collectors

- Workflow pipelines

- Reporters

Collectors

Collectors collect application-specific data points from one or more applications that are monitored. They are packaged as JAR files. Core Collection Agent collectors are offered out of the box.

There are three collectors:

- StatsD — receives metrics from instrumentation libraries. There are two libraries that send data that can be consumed by this collector:

  - StatsD client Java library

  - StatsD client Python library

- Kubernetes metrics collector (KubernetesMetricsCollector) — listens to the Kubernetes API.

- Kubernetes log collector (KubernetesLogCollector) — locates and reads application logs.
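To give a feel for what an instrumented application sends to a StatsD collector, here is a minimal Python sketch that uses only the standard library and the plain StatsD line format `<name>:<value>|<type>`. It does not use the StatsD client libraries listed above. The helper names, the metric name, and the default port 8125 (the conventional StatsD port) are assumptions for illustration; check your own StatsD configuration for the actual address.

```python
import socket


def format_statsd(name: str, value: float, metric_type: str) -> bytes:
    """Encode one metric in the plain StatsD line format: <name>:<value>|<type>."""
    # c = counter, g = gauge, ms = timer, s = set
    if metric_type not in {"c", "g", "ms", "s"}:
        raise ValueError(f"unsupported StatsD metric type: {metric_type}")
    return f"{name}:{value}|{metric_type}".encode("ascii")


def send_metric(payload: bytes, host: str = "127.0.0.1", port: int = 8125) -> None:
    """Send one UDP datagram; UDP is fire-and-forget, so no reply is awaited."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.sendto(payload, (host, port))


if __name__ == "__main__":
    # Hypothetical metric name; increments a counter by one.
    send_metric(format_statsd("orders.processed", 1, "c"))
```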

Workflow pipelines

A workflow is composed of pipelines, which have processors to perform operations on the data collected from the applications and services. A workflow receives data from collectors, enriches this data, and sends it to a reporter. Filtering and enriching of data is configured per pipeline. There is a pipeline for each class of data:

- Metrics

- Logs

- Events

Once data has been processed, the pipeline sends it to a reporter. Each pipeline sends data to a single reporter.

Each workflow pipeline is backed by a store in which data points are buffered before being sent to a reporter. Each store is configured with a maximum capacity. The store can be either in memory or on disk.
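The buffering idea can be sketched as a bounded in-memory queue. This is only an illustration: the `BoundedStore` class is hypothetical, and rejecting new data points when the store is full is an assumption, since the text above does not say how the Collection Agent behaves at capacity.

```python
from collections import deque
from typing import Deque, List


class BoundedStore:
    """Hypothetical in-memory buffer with a fixed maximum capacity."""

    def __init__(self, capacity: int) -> None:
        if capacity <= 0:
            raise ValueError("capacity must be positive")
        self._capacity = capacity
        self._items: Deque[str] = deque()

    def offer(self, item: str) -> bool:
        """Buffer an item; return False when the store is full (assumed policy)."""
        if len(self._items) >= self._capacity:
            return False
        self._items.append(item)
        return True

    def drain(self, max_items: int) -> List[str]:
        """Remove and return up to max_items, oldest first, for a reporter."""
        batch: List[str] = []
        while self._items and len(batch) < max_items:
            batch.append(self._items.popleft())
        return batch


store = BoundedStore(capacity=2)
accepted = [store.offer(p) for p in ("cpu=0.4", "mem=512", "disk=80")]
batch = store.drain(max_items=10)
```

A disk-backed store would follow the same interface but write items to a file instead of holding them in memory.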

Processors

A pipeline configuration is composed of processors. When data crosses the boundary from a collector, it becomes a message. Messages are then modified by processors in stages as they move through a pipeline.

There are four types of processors:

- Enrichment processors

- Pass filter processors

- Stop filter processors

- Throttle processors
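The staged processing of messages can be sketched in Python as a chain of functions. The behaviour of each processor type here is inferred from its name and is an assumption: enrichment adds dimensions, a pass filter keeps only matching messages, a stop filter drops matching messages, and a throttle limits how many messages pass in a time window. All function names and message keys are hypothetical.

```python
import time
from typing import Callable, Dict, Iterable, List, Optional

Message = Dict[str, str]
# A processor returns the (possibly modified) message, or None to drop it.
Processor = Callable[[Message], Optional[Message]]


def enrich(extra: Dict[str, str]) -> Processor:
    """Enrichment: add dimensions without overwriting existing ones."""
    return lambda msg: {**extra, **msg}


def pass_filter(key: str, allowed: Iterable[str]) -> Processor:
    """Pass filter: keep only messages whose `key` value is in `allowed`."""
    allowed_set = set(allowed)
    return lambda msg: msg if msg.get(key) in allowed_set else None


def stop_filter(key: str, blocked: Iterable[str]) -> Processor:
    """Stop filter: drop messages whose `key` value is in `blocked`."""
    blocked_set = set(blocked)
    return lambda msg: None if msg.get(key) in blocked_set else msg


def throttle(max_messages: int, per_seconds: float) -> Processor:
    """Throttle: drop messages beyond `max_messages` per time window."""
    window_start = time.monotonic()
    count = 0

    def process(msg: Message) -> Optional[Message]:
        nonlocal window_start, count
        now = time.monotonic()
        if now - window_start >= per_seconds:
            window_start, count = now, 0  # start a new window
        if count >= max_messages:
            return None
        count += 1
        return msg

    return process


def run_pipeline(messages: Iterable[Message], processors: List[Processor]) -> List[Message]:
    """Apply the processors in order; a None result drops the message."""
    out: List[Message] = []
    for msg in messages:
        current: Optional[Message] = msg
        for proc in processors:
            current = proc(current)
            if current is None:
                break
        if current is not None:
            out.append(current)
    return out


pipeline = [
    stop_filter("namespace", ["kube-system"]),
    pass_filter("app", ["orders", "billing"]),
    enrich({"cluster": "dev"}),
    throttle(max_messages=2, per_seconds=60.0),
]
messages = [
    {"app": "orders", "namespace": "shop"},
    {"app": "dns", "namespace": "kube-system"},
    {"app": "billing", "namespace": "shop"},
    {"app": "orders", "namespace": "shop"},
]
result = run_pipeline(messages, pipeline)
```

In this run the second message is dropped by the stop filter and the fourth by the throttle, so two enriched messages remain.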

Reporters

Reporters publish data from workflows. For example, data can be published to Geneos where it is visualised.

There are three reporters:

- Logging reporter — logs data points to stdout.

- TCP reporter — allows the Collection Agent to communicate with the Netprobe.

- Kafka reporter

Multiple instances of the same reporter can exist.
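The relationship between pipelines and reporter instances can be sketched as follows. This is a hypothetical model, not the Collection Agent's configuration or API: the `LoggingReporter` class, the instance names, and the `pipeline_to_reporter` mapping are invented for illustration.

```python
import sys
from typing import Dict, TextIO


class LoggingReporter:
    """Hypothetical reporter that writes each data point as a line of text."""

    def __init__(self, name: str, stream: TextIO = sys.stdout) -> None:
        self.name = name
        self._stream = stream

    def publish(self, data_point: str) -> None:
        self._stream.write(f"[{self.name}] {data_point}\n")


# Two instances of the same reporter type, each with its own name.
reporters: Dict[str, LoggingReporter] = {
    "metrics-log": LoggingReporter("metrics-log"),
    "events-log": LoggingReporter("events-log"),
}

# Each pipeline sends its data to exactly one reporter instance.
pipeline_to_reporter: Dict[str, str] = {
    "metrics": "metrics-log",
    "events": "events-log",
}


def report(pipeline: str, data_point: str) -> None:
    """Publish a data point through the reporter assigned to the pipeline."""
    reporters[pipeline_to_reporter[pipeline]].publish(data_point)
```

Calling `report("metrics", "cpu=0.4")` writes the data point through the `metrics-log` instance only.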

Plugins

The functionality of the Collection Agent can be extended using plugins. A Collection Agent plugin is a JAR file that contains one or more collector, processor, and reporter components that facilitate data collection from a specific source.

The following plugins are available:

- StatsD plugin — provides a StatsD server that allows custom metrics to be collected from any application instrumented with a StatsD client.

- Monitoring plugin — provides a suite of collectors and processors necessary for collecting logs, metrics, and events in a Kubernetes or OpenShift environment.

- Prometheus plugin — gathers real-time metrics and alerts from the Prometheus server and Alert Manager.